tt

tt

tt

tt

tt

tt

Technology

•

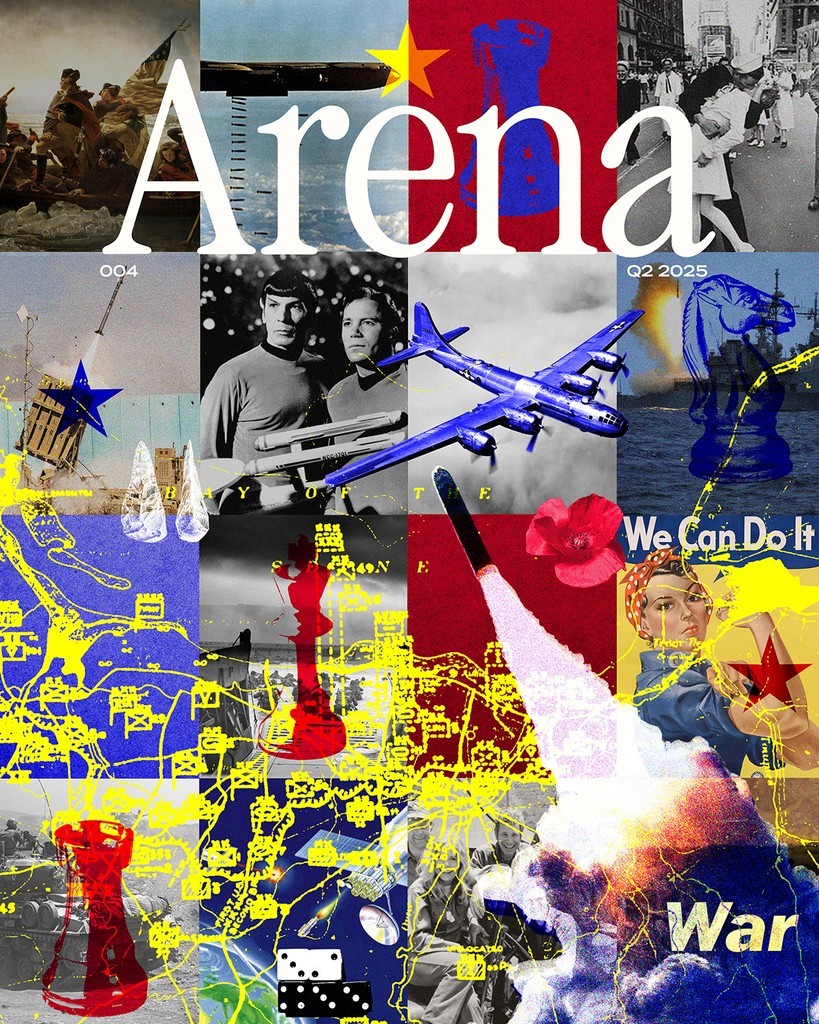

Always Listening

Signals Intelligence as a Framework for Our AI World.

Get the Mag in Print.

Arena publishes four stunning print editions per year, full of stories just like this one on American technology, capital, and industry.

When I was a Second Lieutenant in the U.S. Marine Corps training to become a Signals Intelligence Officer, I spent many months working in a dark, windowless room in Layton Hall, a building in a secret town in Virginia hosting a small intelligence base named after a man most Americans have never heard of. Edwin Layton was not a fleet commander or a battlefield tactician — he never commanded on the bridge of an aircraft carrier or appeared in recruitment posters. He was an intelligence officer, serving from 1924 to 1959, and he helped win the single most decisive naval battle in American history: Midway.

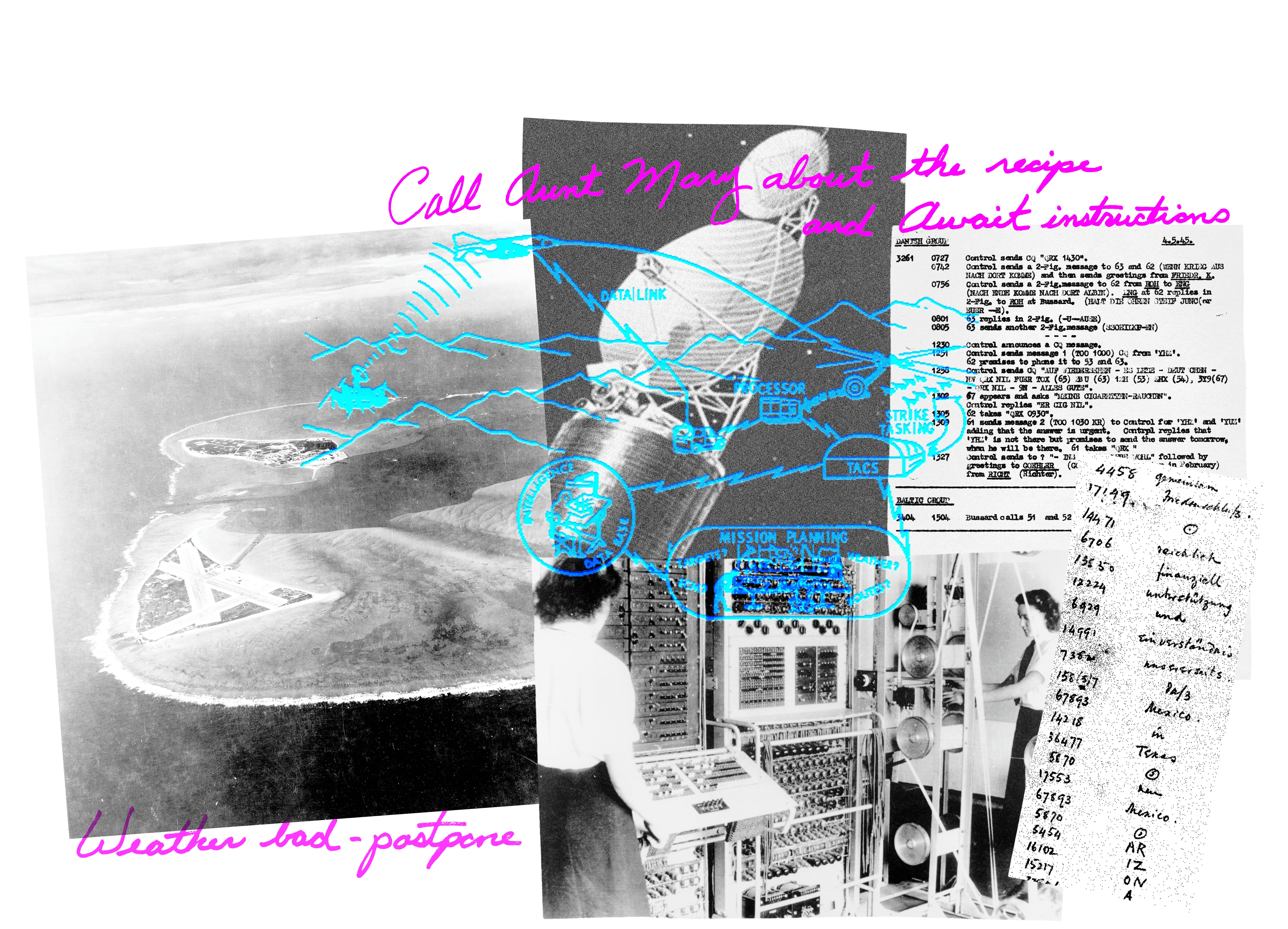

Layton served as the chief intelligence officer for Admiral Chester Nimitz, the Commander in Chief of the U.S. Pacific Fleet, and Commander in Chief of the Pacific Ocean Areas’ naval fleet during the Second World War. In the spring of 1942, American Signals Intelligence (intelligence derived from electronic signals and systems used by foreign targets) — SIGINT — intercepted Japanese communications indicating an imminent offensive against a target referred to only as “AF.” The United States had access to the signals, but lacked certainty about their meaning.

That distinction between access and understanding sits at the heart of intelligence work and is often misunderstood outside it. Signals Intelligence is frequently imagined as a technical feat: codebreaking, interception, and collection at scale. In reality, those capabilities are necessary but insufficient. Signals only become intelligence once a human institution decides what they mean and what to do with them. The defining challenge of SIGINT has always been interpretation.

At Midway, American military analysts debated what “AF” referred to. Some believed it was Hawaii. Others suspected a location in the Aleutians. Acting on the wrong interpretation would have exposed the U.S. Navy’s remaining aircraft carriers to catastrophic loss, effectively ceding control of the Pacific to Japan. There was strong consensus in the Navy brass not to challenge the bureaucracy and not to speculate on alternative theories. It is very hard to project doubt against an admiral or general’s expertise, especially as a junior ranking officer.

The resolution came from analytic judgment and trained minds ready to interpret, doubt, and govern the signals — not from additional collection or more sophisticated cryptography. Consider how this played out at Midway. Edwin Layton and his team understood something fundamental: signals do not exist in isolation. They exist in adversarial environments. The Japanese were listening too — to American intelligence. So as a test, the Americans transmitted an unencrypted message stating that Midway Island’s freshwater condenser had broken and the garrison was running dangerously low on water. Shortly afterward, Japanese intelligence traffic reported that “AF is short on fresh water.” The ambiguity collapsed. AF was Midway, and the course of the war was bent by a few military nerds hunched over primitive machines.

That act — testing an interpretation rather than trusting it — allowed the U.S. Navy to concentrate its forces and ambush the Japanese fleet. The Battle of Midway, which happened three months after Layton’s team decoded “AF,” altered the course of the Pacific War. It is often remembered as a triumph of interception, the covert acquisition of signals and electronic emissions, or cryptography, the breaking of coded communications. But it was greater than both of that; it was a triumph of disciplined doubt. The codebreakers proved themselves right in rejecting the common belief that AF referred to Hawaii or the Aleutians.

In the U.S. military and intelligence community, SIGINT is one of several foundational intelligence disciplines, alongside human intelligence (HUMINT), open source intelligence (OSINT), imagery intelligence (IMINT), and a host of others. Each exists to reduce a different kind of uncertainty. SIGINT focuses on communications and electronic emissions: radio transmissions, radar signals, satellite links, digital traffic, and the metadata that accompanies them. The National Security Agency (NSA) is the country’s primary SIGINT organization and practitioners throughout the military and intelligence community mark their work with logos, slogans, and patches denoting the ways in which we “are always listening.” Military organizations prefer skulls and other terror-inducing mottos. But perhaps nothing is more frightening than knowing in an Orwellian sense that everything you are saying can be heard and tracked.

In our hyper-technological age, digital enemy communications are the most critical and valuable type of intelligence, often classified at the Top Secret level. Due to both the scale of data produced and the sheer speed with which we communicate now, the 21st century has thrust SIGINT to the forefront. Our tools and tactics may have evolved over time, but the basic discipline — “trust but verify” — remains the framework that allows us to interpret what is real, what is fake, and, most importantly, how to act on it. Signals have changed dramatically since 1942, as signals data now flows in petabytes rather than Morse code dots, but its disciplined analysis holds its core value.

Institutional Overconfidence

Among the many concerns raised about artificial intelligence — bias, fairness, ethics, robustness — there is one that deserves sharper attention: the risk not simply of hallucination or error, but of institutional overconfidence arising when large systems produce authoritative-sounding outputs without clear human accountability. This is playing out today: the Bank of England and creditors in the UK are using AI to make creditworthiness decisions for consumers, often accepting the algorithm’s output without meaningful review. In fact, I’d argue institutional overconfidence is the most dangerous failure mode of AI. It’s particularly bad when systems that feel authoritative obscuring who, exactly, is responsible for interpreting their outputs.

Midway reveals why this matters. The U.S. Navy could only act decisively because Layton’s team not only intercepted enemy signals, but owned the interpretation, tested hypotheses, and insisted that uncertainty be explicit. In contrast, many modern AI systems produce conclusions with persuasive fluency while hiding uncertainty, sources, and interpretive context.

Recently, in the civilian world, a passenger relied on Air Canada’s website chatbot for information about bereavement fares. The chatbot confidently told him he could apply for a refund after travel — directly contradicting the airline’s actual policy. When he sought the refund, Air Canada refused and even argued that the chatbot was effectively separate from the company. A tribunal rejected that logic and held the airline responsible for what its bot had said. The failure was not that the chatbot was wrong, but that no one could identify who was responsible for its answer. This creates a psychological and institutional trap: users and organizations begin to trust the answer without considering its provenance or limitations. Air Canada is just a civilian airline, but it’s also a typical bureaucracy struggling to understand how to integrate AI into decision-making.

Now consider a military analogue. Russia has been publicly rolling out an AI-enabled command-and-control decision-support system called Svod, explicitly designed to fuse disparate intelligence inputs into a single operational picture for commanders and speed the decision cycle. Russian reporting describes Svod as giving officers real-time access to maps, satellite imagery, weather, and “air and ground situation” data inside a protected information space, with trial “combat operations” reportedly conducted in at least one troop grouping and a plan for broader implementation. Other reporting describes Svod as aggregating inputs from satellites, aerial reconnaissance, intelligence reports, and open sources, then using automated analysis to help commanders choose courses of action under battlefield pressure. But when Svod recommends a faulty course of action, who is responsible? The commander who followed it, or the system designers who may not fully understand Ukrainian deception or spoofing tactics?

This is exactly where institutional overconfidence can emerge: a fused, authoritative-looking “single picture” can feel like consensus even when it’s built from correlated, incomplete, or adversary-shaped signals — and when the system’s uncertainty (or the fragility of its inputs under electronic warfare and deception) isn’t made explicit, responsibility for interpretation quietly shifts from accountable analysts to an algorithmic dashboard.

The risk of institutional overconfidence appeared in intelligence history long before the age of neural networks. There is the famous idea in statistics: finding the “signal through the noise,” but I fear we’re entering a world where everything appears to be a meaningful signal, and we take those signals for action. Intelligence is the process of going that one step further to refine the signal until we have an answer, beyond a reasonable doubt. A functioning apparatus of humans, along with procedures to check their judgment, is essential in building out AI-forward institutions.

When Interpretation Falters: Lessons from SIGINT History

In November 1983, throughout various European countries, NATO conducted Able Archer 83, a routine exercise involving simulated command post activity and nuclear release procedures. To Soviet observers, however, the signals produced by the exercise looked strikingly like preparations for an actual first strike. Intercepted communications and unusual radio traffic — harmless to Western planners — were read through a lens shaped by recent Cold War tensions, like the massive increase in encrypted communications between the US and the UK. (These ciphered comms were actually a discussion about Grenada, but the sudden increase was abnormal for Soviet listeners)

Listening in, the Soviet leadership grew spooked by how realistic the exercise appeared. Coded communications and radio silences — standard military procedures during exercises — were read as genuine operational security measures. (The US military now often announces “exercise, exercise, exercise” before simulations to prevent precisely this kind of confusion.) Soviet institutions, perpetually paranoid and convinced that the threat was real, responded in turn: calling up forces, dispersing nuclear assets, and scrambling fighter jets.

The result was not a clear picture of NATO’s intentions, but intensified fear and misinterpretation. The world nearly stumbled into nuclear war — neither side wanted war, but ambiguous signals were interpreted with excessive confidence and insufficient skepticism. In the absence of mechanisms for adversarial hypothesis testing (asking “could this be an exercise?”), institutional assumptions hardened into existential interpretations. No single Soviet analyst “owned” the judgment that these signals were just an exercise; confidence grew through collective momentum rather than critical evaluation.

A decade earlier, Israeli intelligence faced the mirror image of this problem. While the Soviets saw threats where none existed, Israeli analysts dismissed genuine warnings because they contradicted institutional doctrine.

In October 1973, Israeli intelligence received multiple indicators suggesting that Egypt and Syria might attack. The signals were there — SIGINT, human intelligence, and other data like troop movement, leadership locations, and unique press articles — in retrospect, unambiguous. What went wrong was interpretation. The Israelis believed that Egypt would not act until they had secured air superiority through Soviet aircraft, and this assumption blurred the concrete information intelligence had gathered.

Back in 1972, the Israelis had secretly connected listening devices to Egyptian communications in the Sinai. To avoid detection, the devices remained off unless IDF command gave an order to activate them. During the build-up to the surprise attack in which the Egyptians and Syrians launched a coordinated offensive against Israel, controversial General Eli Zeira, who ran the intelligence directorate, refused to turn on the system. When he eventually allowed a ten-hour collection window, it picked up nothing unusual, and he ordered it switched off. By doing so, he missed the crucial signals broadcast in the hours that followed.

Zeira was following the prevailing assumption that Egypt would only attack once it had Soviet aircraft capable of providing air superiority. This thinking was doctrinal, and the institution failed to challenge it. Though the Israelis had the means to collect further intelligence — and act on it — they squandered both. On Yom Kippur, October 6, 1973, the holiest day in the Jewish year, Egypt and Syria invaded.

These failures underscore a core insight of intelligence practice: data does not interpret itself. Interpretation reflects assumptions, and unchecked assumptions can drown out valid signals.

Signals and AI Today: What Modern Research Shows

The analogies between SIGINT failures and modern AI are not merely rhetorical. Research into large language models (LLMs) reveal concrete manifestations of the same interpretive risks that plagued Cold War intelligence analysts.

One of the most studied failure modes in LLMs is hallucination, where the model produces text that is fluent and plausible but factually incorrect. Hallucination arises not from malice but from the training process itself: models are optimized to produce coherent completions, not to admit uncertainty. As researchers at OpenAI explain, LLMs frequently “guess when uncertain, producing plausible yet incorrect statements instead of admitting uncertainty”— a dynamic rooted in how they are optimized for performance rather than reliability.

Comprehensive surveys of LLM behavior confirm that hallucination is widespread, persistent across model families, and context-dependent. Even state-of-the-art systems can still produce highly confidently wrong outputs when given carefully grounded prompts.

Hallucination is not just a technical nuance. In critical domains like healthcare or law, even rare confident falsehoods can have disproportionate consequences. Research classifying hallucination types in medical and life sciences contexts finds that models produce outputs that are “factually incorrect, irrelevant, or misleading” — all of which undermine trust and efficacy in high-stakes environments. The parallel to SIGINT is clear: like intercepted signals, LLM outputs can be abundant, coherent, and superficially persuasive while masking fundamental uncertainty. In the SIGINT world, interpreters hold security clearances and receive formal training; with AI, these systems are deployed on a civilian populace and legacy institutions that lack any framework for systematic doubt or verification. We have millions of GPUs and the greatest hardware mankind has ever built, yet we don’t have the human software in our own gray matter to match the task.

And the problem is getting worse. As language models become more sophisticated, they become better at producing fluent content that feels authoritative — regardless of whether it’s accurate. Scholars call this AI trust paradox: advancements in fluency make outputs harder to distinguish from truth, even when they are misleading.

This paradox reflects the same dynamic that undid analysts before the Yom Kippur War. Confidence in surface coherence masked deeper uncertainty, leading institutions to treat preliminary assessments as confirmed intelligence. With AI, the trap is structural: every response we get is not an answer but a probability-weighted directional guess at what may or may not be correct.

What distinguishes successful SIGINT practice is not raw data collection, but interpretive discipline. Intelligence analysts are trained to articulate uncertainty explicitly rather than obscure it, test alternative hypotheses rather than converge prematurely on a single interpretation, expect adversarial behavior, and anchor every conclusion to specific human judgment.

Raw data collection matters far less than interpretive discipline. These practices reflect hard lessons from Midway, Able Archer, and Yom Kippur, forged through catastrophic near-misses and actual failures. The contrast with AI is stark: when systems produce outputs that feel right, organizations may stop asking why they feel right, who is responsible for verifying them, and what assumptions underlie them.

Another growing body of AI research focuses on calibration: whether a model’s stated or implied confidence matches its actual likelihood of being correct. Across domains, researchers find that large language models are often miscalibrated — particularly in complex or unfamiliar tasks. Models express high confidence when wrong and uncertainty when right, a pattern that worsens under distribution shift.

From an institutional perspective, this is dangerous. Humans are notoriously deferential to confidence cues. When AI systems produce decisive-sounding answers, organizations treat them as authoritative — even absent any mechanism to verify or challenge them. Over time, responsibility migrates from human judgment to system outputs, creating precisely the kind of diffuse accountability that intelligence organizations worked for decades to avoid.

In SIGINT, analysts are trained to separate confidence from certainty. In AI deployment, that distinction is often erased.

Feedback Loops and the Collapse of Independent Signals

Research on model feedback loops reveal another parallel. As AI-generated content proliferates online, models trained on scraped web data risk learning from their own outputs. This phenomenon, called model collapse, reduces diversity, amplifies errors, and narrows the space of possible interpretations.

Intelligence agencies learned that relying on self-referential signals is a recipe for analytic failure. Independent sources matter not because they add volume, but because they provide friction against shared error. A canonical example is the US intelligence failure surrounding Iraq’s alleged weapons of mass destruction in the early 2000s. Much of the analytic confidence rested on a small number of sources — most notoriously a single human source codenamed Curveball — whose reporting was repeatedly recycled, summarized, and corroborated internally without truly independent verification. AI systems, by contrast, often present internally consistent but externally ungrounded consensus. Multiple “answers” may in fact be variations of the same underlying model judgment. A well-documented example occurred in US federal court in 2023, when lawyers in Mata v. Avianca submitted a legal brief citing multiple prior cases that appeared to corroborate one another. In reality, every cited case had been fabricated by a large language model. The illusion of consensus came from the fact that the model generated several internally consistent but entirely fictional precedents, each reinforcing the same underlying narrative.

Why Technical Fixes Are Not Enough

Much of contemporary AI safety research focuses on improved training, better benchmarks, and mitigation protocols. This work is useful, but it is insufficient on its own to address the problem of interpretation. We need technical and institutional training and controls. Technical enhancements for hallucination detection, uncertainty calibration, and adversarial mitigation can reduce certain errors, but they cannot create interpretive accountability.

Consider the extensive research on hallucination detection and reduction — token-level uncertainty estimation, retrieval-augmented generation, and automated fact-checking frameworks. These improve output quality, but what they cannot do is assign responsibility for interpretation. A more accurate model is just a better signal generator.

In intelligence work, an intercepted signal initiates a structured process of analysis, debate, dissent, revision, and formal assessment. Humans are known to perform better with checklists and clear steps, which is exactly what this process encourages. AI systems collapse this process entirely, presenting outputs directly to users without clear chains of human judgment.

Laplace’s Demon

In the early nineteenth century, the mathematician Pierre-Simon Laplace imagined a hypothetical intelligence — later dubbed Laplace’s demon — that knew the position and momentum of every particle in the universe. Given perfect information and sufficient computational power, this intelligence could predict the future and reconstruct the past with complete accuracy. Nothing would be uncertain; nothing would surprise.

Laplace’s demon is a fantasy, but it remains seductive. In defense and intelligence work, sensor fusion and data integration pursue the same dream: a complete picture of the battlespace or information environment. The difference is that defense institutions have built doctrines around accepting fundamental limits to knowledge. Analysts are trained to operate under uncertainty, to distrust perfect clarity, and to assume that adversaries are manipulating signals.

I fear that the private sector has no such muscle of interpretation. Instead, there is a pervasive assumption that more data and faster models will eventually converge toward omniscience—that the demon is achievable with further scale and capital.

Signals intelligence disproved this long ago. No amount of collection ever eliminated uncertainty. In fact, beyond a certain threshold, additional signals increased confidence faster than they increased understanding. Comprehensiveness was a false idol. This is precisely what is happening with AI today: confidence in model outputs is increasing faster than our interpretive capacity to validate — or invalidate — them.

Emergence, Opacity, and the Limits of Interpretability

Contemporary research on LLMs reveals a troubling paradox: as systems grow more capable, they often become less interpretable in human terms. Studies of emergent behavior show that models develop capabilities — reasoning patterns, internal representations, strategic behaviors — that were neither explicitly programmed nor anticipated by their designers. These behaviors appear abruptly at scale, defying linear expectations.

Interpretability research has made impressive progress in mechanistic interpretability, sparse autoencoders, and feature attribution methods, but even leading researchers acknowledge a fundamental gap. Understanding a model’s internal representations does not translate into understanding why a model produces a particular output in a given context. Insight into the machinery does not guarantee insight into the judgment.

This mirrors a core challenge in SIGINT. Analysts may understand exactly how an intercept was collected, decrypted, and processed, yet still struggle to assess what it means — especially when adversaries deliberately shape signals to mislead. AI systems are not (yet) adversarial in this sense, but they create the same interpretive problem.

Where SIGINT is troubled by deception, AI is troubled by compression. AI systems present outputs as complete narratives. Internal uncertainty, conflicting latent representations, and probabilistic tradeoffs are flattened into fluent text. The result is not mere opacity but a false sense of coherence — outputs that read as definitive answers while concealing ambiguity. This is Laplace’s demon in modern form: the fantasy that enough computation can resolve ambiguity and eliminate the need for interpretive judgment.

Why Laplace’s Demon Still Haunts AI

The enduring appeal of Laplace’s demon is not that it seems achievable, but that it absolves institutions of judgment. If outcomes can be computed, responsibility shifts from decision-makers to systems. Failure becomes a technical malfunction rather than a result of human error.

Signals Intelligence rejected this fantasy out of necessity. Adversaries adapt. Context shifts. Meaning remains contested. No system, however powerful, escapes the need for interpretation. Rather than solving this reality, AI obscures it.

The lesson from intelligence history — and from contemporary AI research — is that interpretation cannot be automated away. Systems can surface signals, identify patterns, and reveal possibilities. They cannot own the consequences of acting on them.

Laplace’s demon promises certainty; SIGINT teaches restraint. The choice facing institutions deploying AI is which tradition they will follow.

From Listening to Hearing

We have built systems that listen everywhere. They monitor vast datasets, generate summaries, predict outcomes, and recommend decisions. The harder challenge is learning how to hear — to interpret with discipline, acknowledge uncertainty, and anchor every judgment in human accountability. The future of AI will be determined not by advances in model performance, but by whether institutions develop the interpretive discipline that intelligence organizations forged through decades of failure and near-catastrophe.

The stakes are existential. The next collision between AI and SIGINT may come when the Chinese PLA operates in the Taiwan theater. Will exercises in the Taiwan Strait be mistaken for all-out war? Will algorithmic pattern-matching mistake mobilization drills for invasion preparations? Will AI-enhanced intelligence systems produce seemingly confident assessments that obscure ambiguous signals? Our immediate challenge is not simply to make AI smarter but to make our institutions wiser — to establish interpretive discipline. Organizations using AI need structured processes borrowed from intelligence tradecraft like alternative analysis and red teaming.

Alternative analysis is a formal intelligence practice designed to prevent premature consensus. Rather than pushing analysts toward a single “best” explanation, it requires the deliberate generation and sustained consideration of multiple competing hypotheses drawn from the same underlying signals. Analysts are expected not only to state what they believe is happening, but to articulate credible alternatives and identify what evidence would support or falsify each one.

This discipline exists because intelligence organizations learned that agreement often emerges before understanding. Time pressure, institutional incentives, and persuasive signals can all drive early closure. Alternative analysis introduces friction into that process. It slows judgment at precisely the moments when confidence feels highest.

Applied to AI-enhanced systems, alternative analysis would mean resisting the impulse to treat model outputs as default interpretations. Instead of asking, “What does the system conclude?” institutions would ask, “What else could explain this pattern — and how would we know if the system is wrong?” Competing interpretations are not a failure mode; they are a safeguard.

Red teaming is the intelligence community’s way of institutionalizing adversarial thinking. Dedicated teams are tasked with challenging prevailing assessments by examining how an intelligent opponent might manipulate signals, exploit analytic blind spots, or deliberately induce misinterpretation. Where alternative analysis focuses on internal disagreement, red teaming assumes the environment itself is hostile.

In SIGINT contexts, red teams ask how signals could be staged, spoofed, or shaped to produce misleading patterns. They explore how an adversary might design activity specifically to trigger analytic models or reinforce existing assumptions. The goal is not prediction, but resilience.

Crucially, red teams are structurally independent. Their value depends on being insulated from institutional pressure and career risk. They are not rewarded for consensus, but for surfacing vulnerabilities early. In AI-enabled organizations, red teaming helps prevent systems from becoming confidently wrong by treating apparent clarity as something to be tested, not trusted.

Only then can we avoid the traps of misplaced certainty and build systems that support judgment rather than override it.

About the Author

Matthew Weiss is the co-founder and Chief Financial Officer at Circuit, which is building the world’s first company-centric knowledge network.